Late last night, I finished the math and code that correct my high-precision display colorimeter measurements using spectral readings taken with a spectrophotometer.

During #MWC15, I went from booth to booth with both an X-Rite i1 Display Pro colorimeter, which is particularly quick, and an EFI-ES1000 spectrophotometer (the same as an X-Rite i1 Pro), connecting them alternately to the tablet running my software.

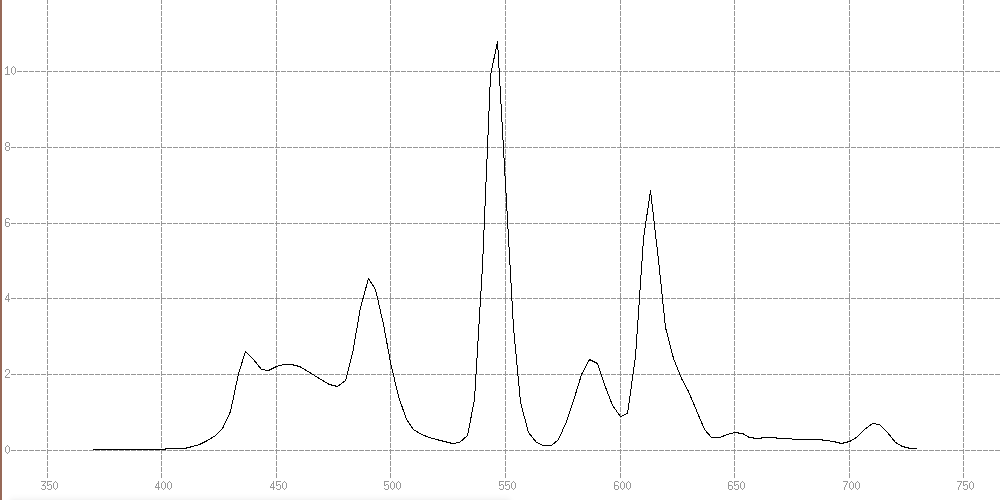

The spectrophotometer is about 3 times slower, but it has the merit of measuring the intensity of light at each wavelength. The colorimeter instead uses some kind of RGB sensor, which can report real colors only if the display's spectral characteristics match what the sensor has been optimized for.
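For readers wondering what such a correction can look like, here is a minimal sketch of one common approach: a 3x3 matrix that maps the colorimeter's XYZ readings of the red, green and blue primaries onto the spectrophotometer's XYZ readings of the same patches. This is an assumption for illustration and not necessarily the math my software uses; the function names and all sample numbers are hypothetical placeholders, not real measurements.

```python
# Sketch of a 3x3 matrix correction for a colorimeter, assuming the display
# mixes its primaries additively. Names and numbers are illustrative only.

def mat_mul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def mat_inv3(m):
    """Invert a 3x3 matrix with the adjugate / determinant formula."""
    (a, b, c), (d, e, f), (g, h, i) = m
    det = a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)
    if abs(det) < 1e-12:
        raise ValueError("primaries are not linearly independent")
    return [
        [(e * i - f * h) / det, (c * h - b * i) / det, (b * f - c * e) / det],
        [(f * g - d * i) / det, (a * i - c * g) / det, (c * d - a * f) / det],
        [(d * h - e * g) / det, (b * g - a * h) / det, (a * e - b * d) / det],
    ]

def columns(*vectors):
    """Stack XYZ vectors as the columns of a matrix (rows are X, Y, Z)."""
    return [list(row) for row in zip(*vectors)]

def correction_matrix(colorimeter_rgb, reference_rgb):
    """Return M such that M * colorimeter_XYZ equals reference_XYZ
    for each of the three primary patches."""
    return mat_mul(columns(*reference_rgb), mat_inv3(columns(*colorimeter_rgb)))

def apply_correction(m, xyz):
    """Apply the correction matrix to one colorimeter XYZ reading."""
    return [sum(m[i][k] * xyz[k] for k in range(3)) for i in range(3)]

# Placeholder XYZ readings of the R, G, B patches (made-up values).
colorimeter_rgb = [(41.0, 21.0, 2.0), (36.0, 72.0, 12.0), (18.0, 7.0, 95.0)]
reference_rgb = [(42.5, 22.0, 2.1), (35.0, 70.0, 11.0), (19.0, 7.5, 99.0)]

M = correction_matrix(colorimeter_rgb, reference_rgb)
corrected_red = apply_correction(M, colorimeter_rgb[0])
```

With this kind of scheme, the slow spectrophotometer is only needed for a few reference patches per display, and the fast colorimeter handles every other reading through `apply_correction`. The matrix is only valid for the display type it was built on.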

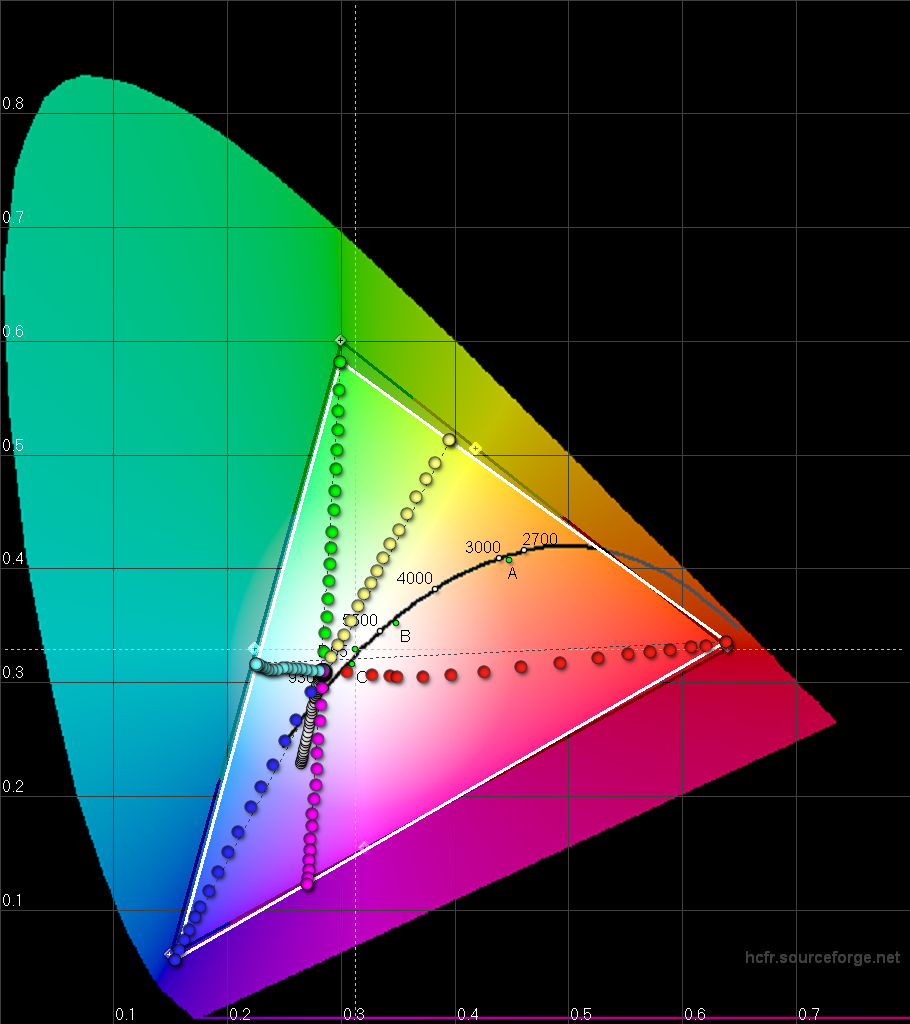

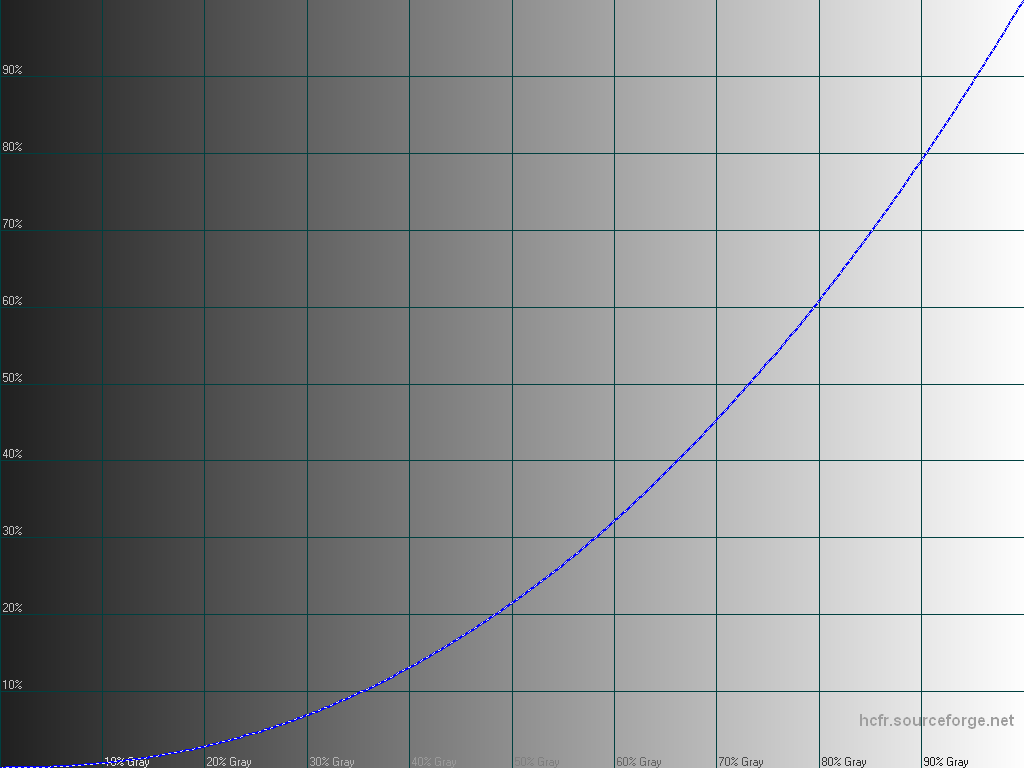

The correction logic was not implemented until now, so here are graphs from an HTC One M9 unit, before and after correction.

Not only does the spectrophotometer see something different from the colorimeter, but these measurements also confirm why you've heard reviewers mention a "green tint" on the M9.

It is indeed there, and our eyes are very sensitive to an excess of green. We tend to be less bothered by wrong amounts of red and blue, however. The M9 display also has too much blue and not enough red.
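To put numbers on a tint like this, one option is to convert a measured white from CIE XYZ to linear sRGB and look at each channel relative to a D65 target. The sketch below assumes an sRGB / D65 target and uses the standard XYZ to linear sRGB matrix; the function name `rgb_balance` and the sample reading are hypothetical, and the numbers are not the M9 measurements.

```python
# Sketch: RGB balance of a white measurement relative to D65 (assumed target).

# Standard XYZ -> linear sRGB matrix (D65 white point).
XYZ_TO_LINEAR_SRGB = [
    [3.2406, -1.5372, -0.4986],
    [-0.9689, 1.8758, 0.0415],
    [0.0557, -0.2040, 1.0570],
]

def rgb_balance(xyz):
    """Return linear R, G, B for a white reading, normalized by luminance Y
    so that a perfect D65 white gives roughly 1.0 on every channel."""
    x, y, z = xyz
    if y <= 0:
        raise ValueError("luminance Y must be positive")
    scaled = (x / y, 1.0, z / y)  # remove brightness, keep chromaticity
    return [sum(row[k] * scaled[k] for k in range(3))
            for row in XYZ_TO_LINEAR_SRGB]

# Reference: D65 white, which should come out balanced.
d65_balance = rgb_balance((95.047, 100.0, 108.883))

# Made-up reading for illustration only (not real M9 data).
hypothetical_white = (93.0, 100.0, 112.0)
red, green, blue = rgb_balance(hypothetical_white)
```

A channel above 1.0 means the white contains more of that primary than the target calls for, and a channel below 1.0 means less. In the made-up reading, green and blue come out above 1.0 and red below it, the same kind of imbalance described above.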

#progress #supercurioBlog #color #display #calibration #development


Source post on Google+