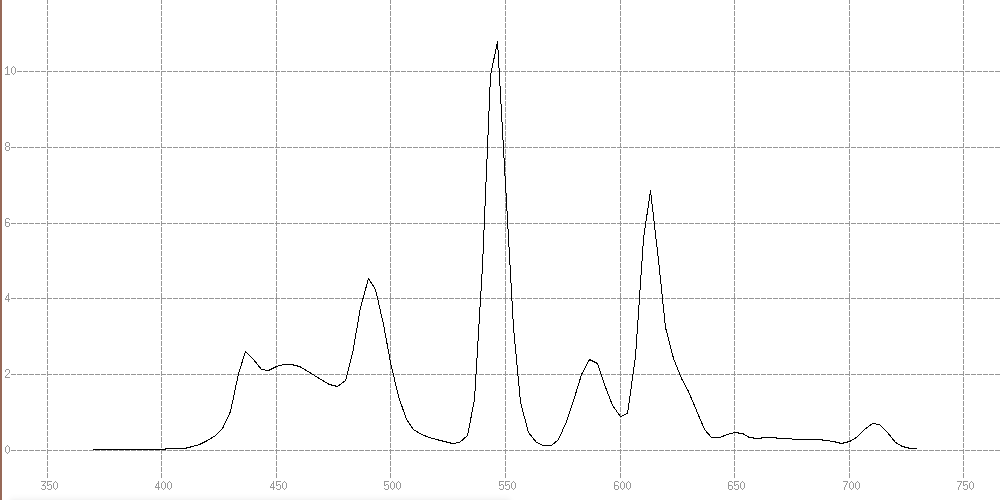

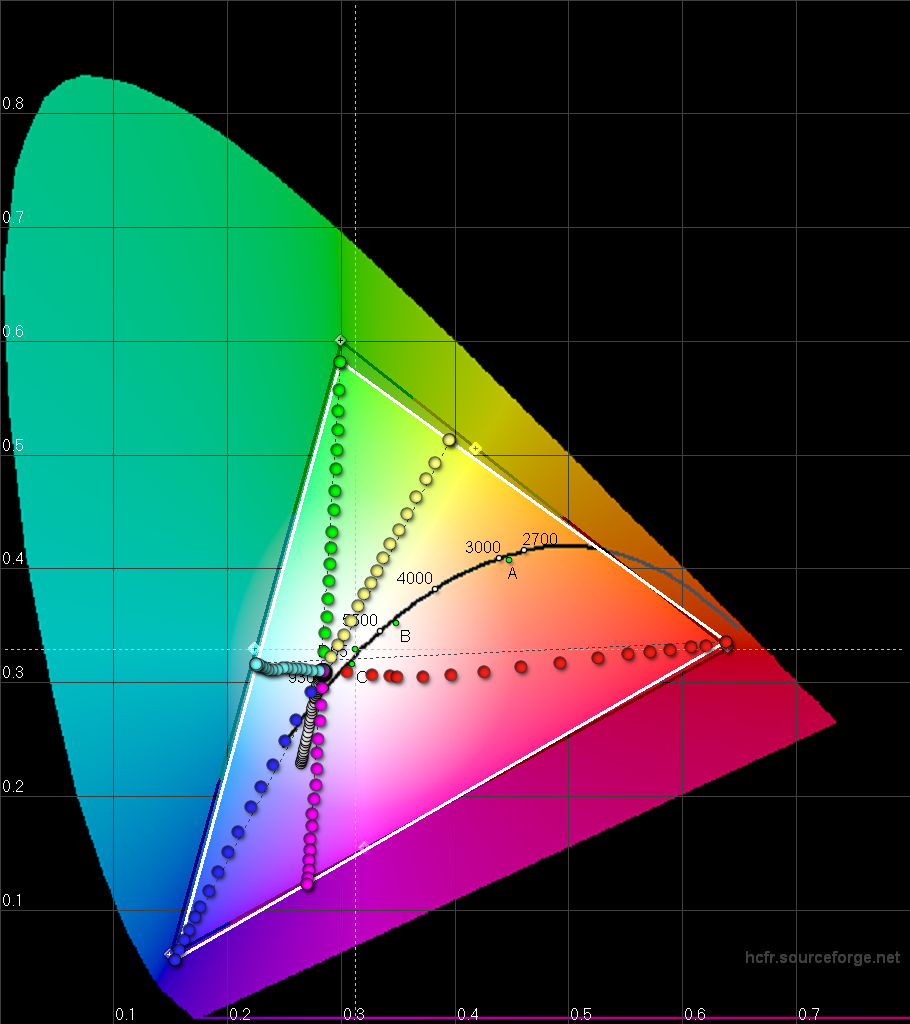

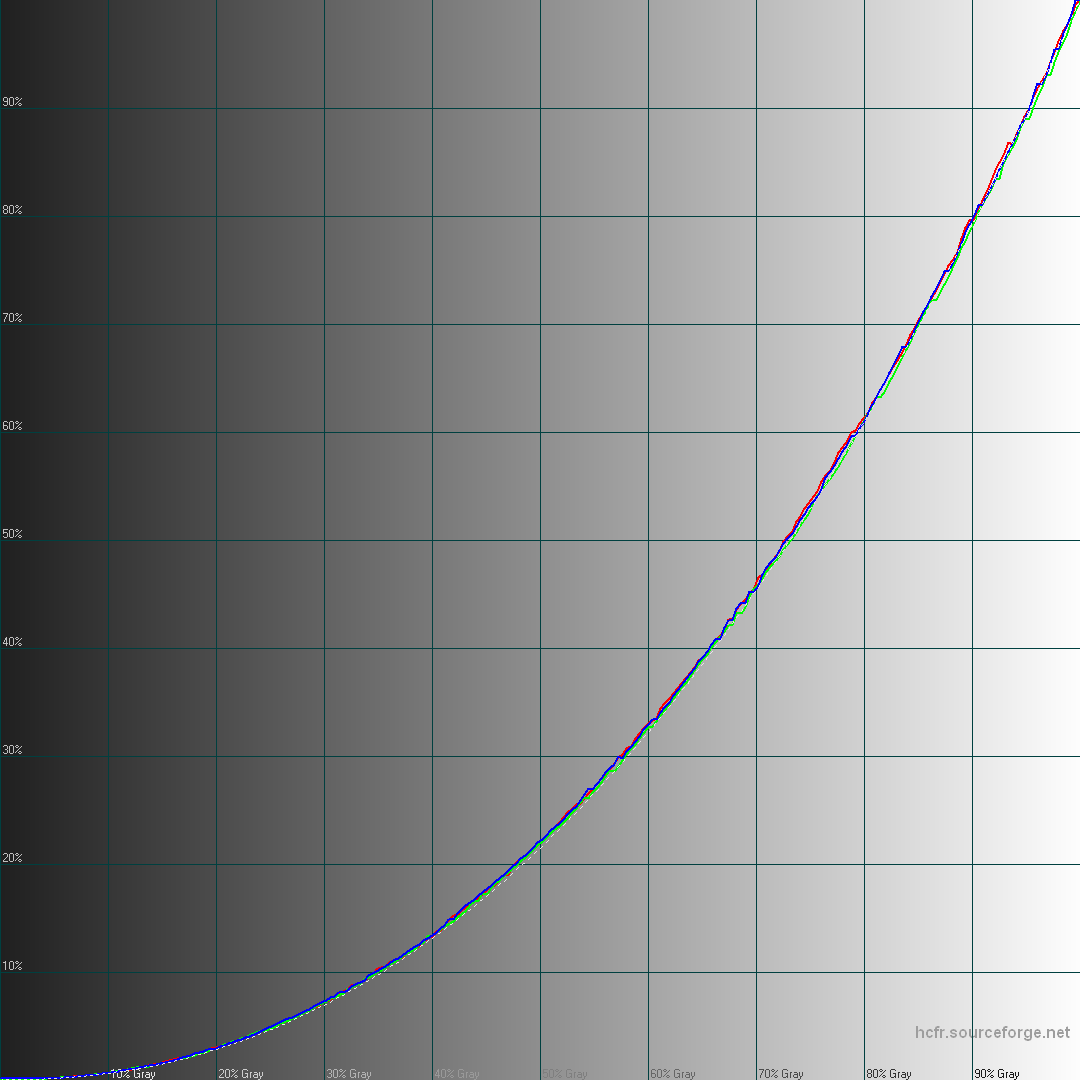

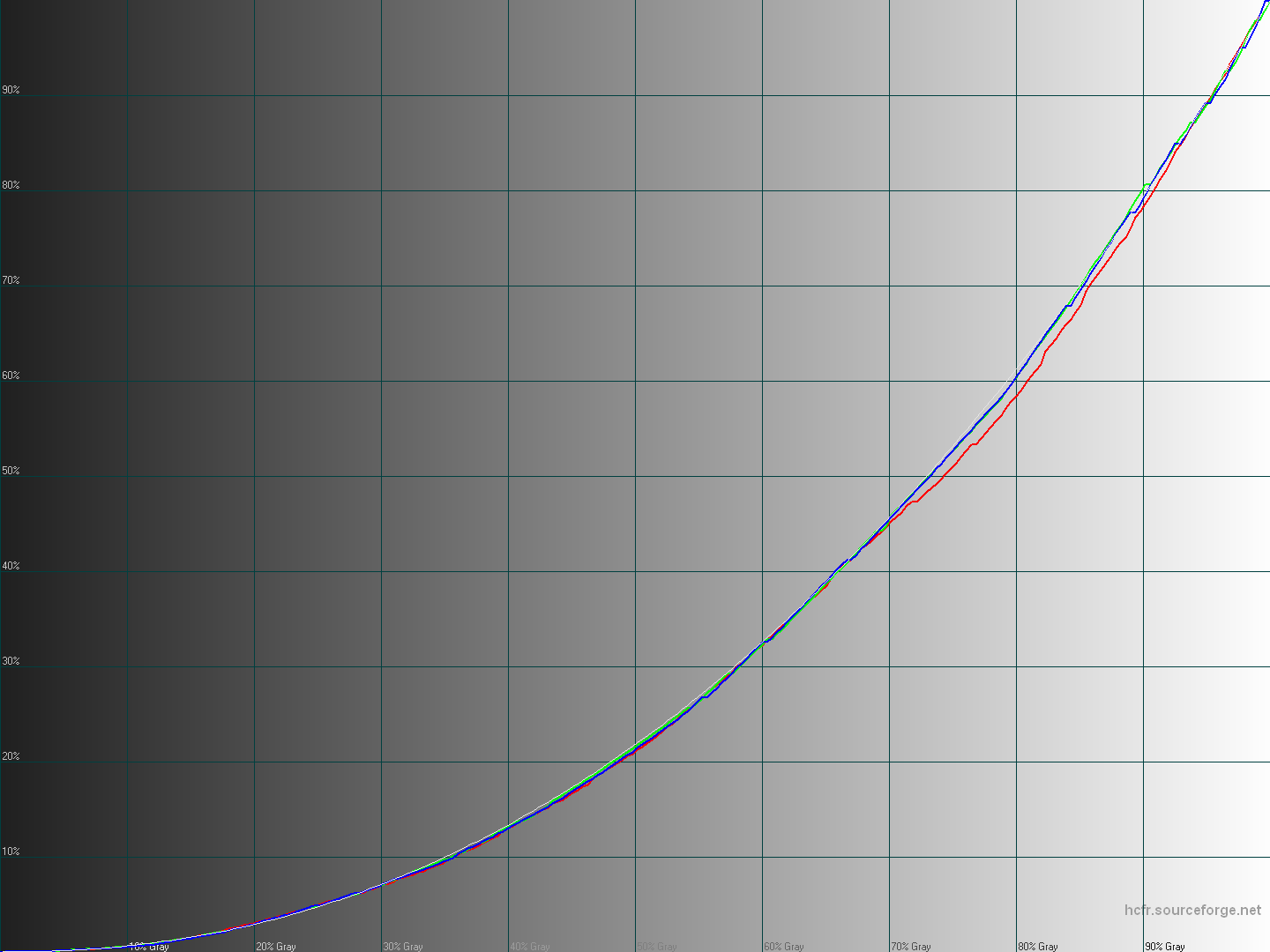

Results are good 🙂

Also, the approach is completely different from everything I've seen so far.

Attached: D65 calibration on Nexus 7 (2013): very first results

I take all the measurements I need first and then everything can be done with calculations.

Usually, auto calibration algorithms measure various kinds of color patches whose selection I can't justify, then try to improve their heavily interpolated early results through several optimization passes.

Not sure why they do that as it seems inefficient. Maybe those algorithms were designed with different goals in mind than mine.

For example, if you're not sure what the hardware will do with your profile, you load it and retry, again and again.

But that means either you're not measuring correctly to begin with, or you're working with inconsistent and unpredictable hardware.

The first huge benefit of my approach seems to be accuracy. Also, you can tune the algorithm's parameters all you want without taking any new measurements (which take a vast amount of time).
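As a rough illustration of "measure once, recompute as often as you like", here is a minimal Python sketch. It is not the actual algorithm from this post: the measurement values are made up (a synthetic 2.4 power curve standing in for real readings), and `level_for_luminance`, `build_lut` and the `gamma` parameter are hypothetical names. It simply inverts a stored grayscale response by linear interpolation, so a correction table for any target gamma can be rebuilt without touching the display again.

```python
from bisect import bisect_left

# Hypothetical stored measurements: (input level 0-255, relative luminance 0-1).
# Made-up values following a 2.4 power curve, standing in for real readings.
MEASURED = [(lvl, (lvl / 255) ** 2.4) for lvl in range(0, 256, 15)]


def level_for_luminance(target, measured=MEASURED):
    """Invert the measured response: find the input level giving `target`."""
    levels = [m[0] for m in measured]
    lums = [m[1] for m in measured]
    if target <= lums[0]:
        return float(levels[0])
    if target >= lums[-1]:
        return float(levels[-1])
    i = bisect_left(lums, target)  # first sample with luminance >= target
    x0, x1 = levels[i - 1], levels[i]
    y0, y1 = lums[i - 1], lums[i]
    # Linear interpolation between the two neighbouring samples.
    return x0 + (x1 - x0) * (target - y0) / (y1 - y0)


def build_lut(gamma, size=256, measured=MEASURED):
    """Build a correction table for a target gamma from stored data only."""
    lo, hi = measured[0][1], measured[-1][1]
    lut = []
    for i in range(size):
        v = i / (size - 1)
        target = lo + (hi - lo) * v ** gamma  # desired luminance for input v
        lut.append(round(level_for_luminance(target, measured)))
    return lut


# Changing a parameter only means recomputing, not re-measuring.
lut_22 = build_lut(2.2)
lut_18 = build_lut(1.8)
```

Both tables come from the same stored data, which is the point: the slow part (measuring) happens once, and trying another target is just another function call.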

Today I'm adding black point compensation strategies to it, in order to provide smooth gradation near black instead of clipping at RGB (10, 10, 15) on the Nexus 7 (2013) when targeting a gamma 2.2 response curve with pure black output.
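For context, one common way to avoid clipping near black is to fold the display's measured black level into the target curve itself. The sketch below uses the input-offset formulation from ITU-R BT.1886, with the exponent set to 2.2 instead of that standard's 2.4. It is only one possible strategy and not necessarily one of those implemented here; the function names and the white and black luminance values are made up for illustration.

```python
def bpc_target(v, white, black, gamma=2.2):
    """Target luminance for normalized input v in [0, 1], BT.1886-style.

    The curve starts exactly at the display's black level and ends at
    its white level, so no input range is clipped.
    """
    root_w = white ** (1 / gamma)
    root_b = black ** (1 / gamma)
    a = (root_w - root_b) ** gamma    # gain
    b = root_b / (root_w - root_b)    # input offset lifting the black end
    return a * max(v + b, 0.0) ** gamma


def pure_gamma_target(v, white, gamma=2.2):
    """Plain gamma curve assuming a perfect (zero luminance) black."""
    return white * v ** gamma


# Hypothetical panel: 400 cd/m2 white, 0.4 cd/m2 black (assumed values).
WHITE, BLACK = 400.0, 0.4

# Levels whose plain-gamma target is darker than the panel can show:
# these are the ones that would clip without compensation.
clipped = [lvl for lvl in range(256)
           if pure_gamma_target(lvl / 255, WHITE) < BLACK]

# With compensation, every level gets a reachable, increasing target.
compensated = [bpc_target(lvl / 255, WHITE, BLACK) for lvl in range(256)]
```

The trade-off is that the compensated curve is no longer a pure 2.2 power law near black: the shadows are lifted a little so they stay distinguishable instead of collapsing into the panel's native black.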

And I'm having a lot of fun doing this!

I really wasn't sure I'd be able to pull off this auto calibration thing, as I thought my maths skills were too limited.

But that seems to be no issue, even if it took quite some time to turn the theoretical concept I had in mind into code.

Back to code 🙂

#supercurioBlog #calibration #display #color #development

In Album Very first auto calibration algorithm results

Source post on Google+